HappyHorse vs Seedance 2.0: The Ultimate Comparison of Leading AI Video Generators 2026

The AI video generation landscape just experienced a seismic shift. On April 7, 2026, a mysterious model called HappyHorse-1.0 appeared on the Artificial Analysis Video Arena leaderboard and immediately claimed the #1 spot, dethroning ByteDance’s Seedance 2.0—the previously undisputed leader in AI video generation.

This comprehensive comparison goes beyond the hype. We analyze the actual performance differences and, most importantly, answer the critical question: which model can you actually use today? We’ve supplemented our analysis with technical architecture breakdowns, real user feedback from Reddit and X (Twitter), and detailed access path comparisons.

Key Findings:

- HappyHorse-1.0 leads in visual motion quality for silent video (112-point Elo advantage in text-to-video).

- Seedance 2.0 maintains superiority in audio-video synchronization and production accessibility.

- The “leaderboard winner” and “production-ready model” are currently two different questions.

Overview of HappyHorse-1.0 and Seedance 2.0

Understanding the fundamental philosophy and development background of each model reveals why they excel in different areas.

HappyHorse-1.0: The Mysterious Open-Source Challenger

HappyHorse-1.0 made a surprising debut on the Artificial Analysis Text to Video Leaderboard (No Audio), quickly gaining attention despite the absence of verified team attribution or corporate backing.

The model claims a 15-billion-parameter unified single-stream Transformer architecture that processes text, video, and audio tokens in one sequence. On paper, it promises:

- Native support for 7 languages (English, Mandarin, Cantonese, Japanese, Korean, German, French)

- Industry-leading lip-sync with low word-error-rate

- Full open-source release with commercial licensing

However, as of April 9, 2026, the GitHub repository, HuggingFace model weights, and official documentation remain marked “coming soon”—creating a significant gap between claimed capabilities and verified accessibility.

Seedance 2.0: The Established Commercial Leader

Seedance 2.0 represents ByteDance’s mature entry into AI video generation, developed by the Seed research team. It is the latest step in a line that runs from PixelDance through Seedance 1.0 and 1.5 Pro.

Seedance 2.0 is architected around a Dual-Branch Diffusion Transformer—one branch dedicated to video frame generation, the other to audio waveform generation, connected via cross-attention for millisecond-level synchronization. This architectural choice was purpose-built for native audio-video sync.
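To make the cross-attention idea concrete, here is a minimal sketch in plain Python. It is not ByteDance's implementation: the token values are made up, the learned projection matrices that a real Transformer applies to queries, keys, and values are left out, and the names `cross_attention`, `video_tokens`, and `audio_tokens` are illustrative assumptions. It only shows the general mechanism by which tokens in one branch can read from tokens in the other.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product cross-attention without learned projections.

    queries: tokens from one branch (here: video frames)
    keys, values: tokens from the other branch (here: audio chunks)
    Returns (outputs, weights), with one row per query token.
    """
    dim = len(queries[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dim).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys]
        w = softmax(scores)
        # Weighted average of the value vectors.
        out = [sum(wj * v[d] for wj, v in zip(w, values)) for d in range(len(values[0]))]
        weights.append(w)
        outputs.append(out)
    return outputs, weights


# Toy embeddings (hypothetical numbers): 3 video-frame tokens, 2 audio-chunk tokens.
video_tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
audio_tokens = [[2.0, 0.0], [0.0, 2.0]]

fused, attn = cross_attention(video_tokens, audio_tokens, audio_tokens)
for frame_idx, row in enumerate(attn):
    print(frame_idx, [round(w, 3) for w in row])
```

In this toy setup the first frame attends mostly to the first audio chunk, the second frame mostly to the second, and the third splits its attention evenly. A dual-branch design would presumably run this kind of exchange in both directions and at many layers, which is one plausible reading of how the two streams stay aligned.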

Key verified capabilities:

- Quad-modal input system (text, image, video, audio)

- Multi-shot storyboarding with narrative planning

- 2K cinema-grade output with 24-60 fps support

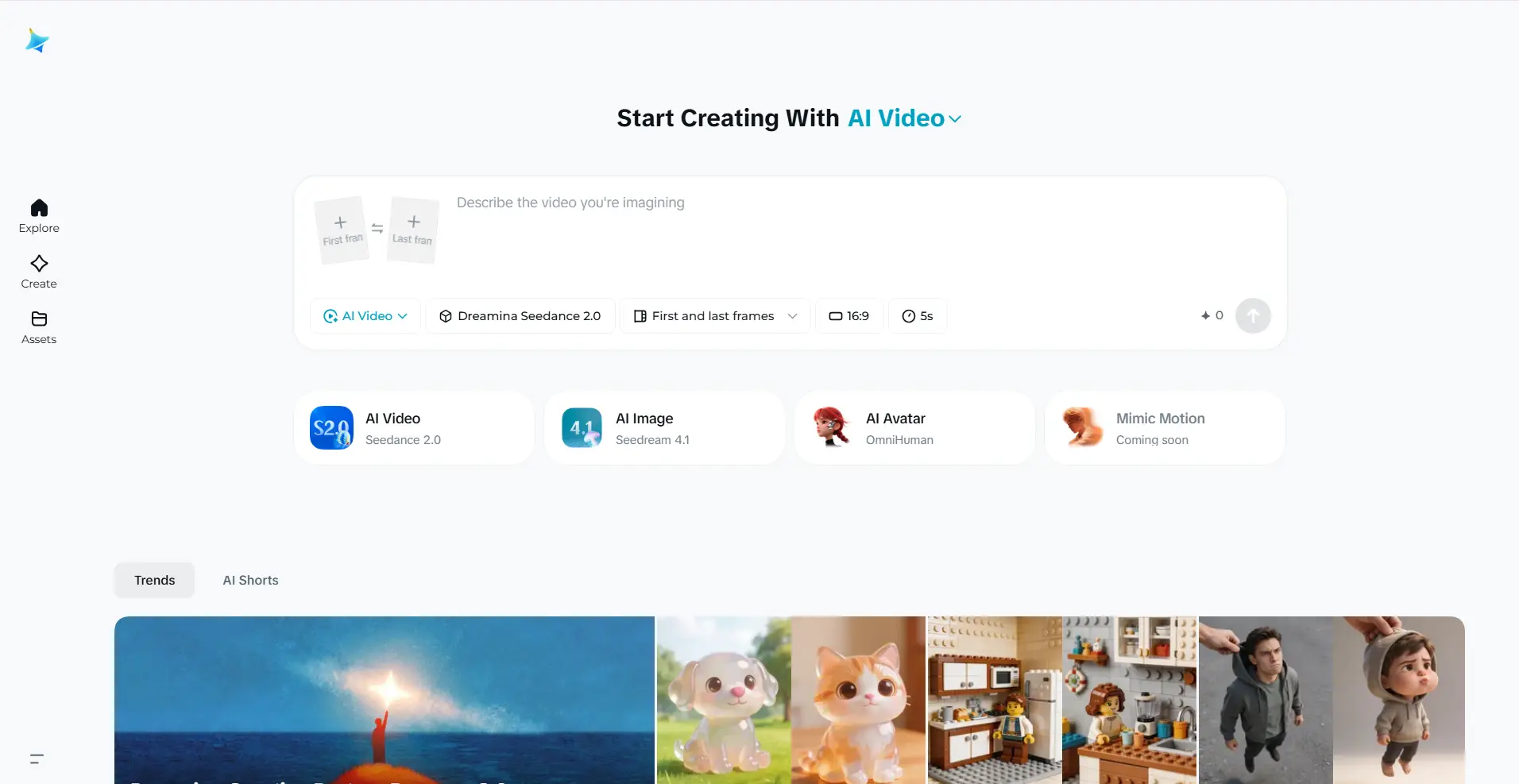

- Accessible via Dreamina (international) and Jimeng (China mainland)

Detailed Comparison: HappyHorse vs Seedance 2.0

The most effective way to understand these models is through a comprehensive feature-by-feature analysis based on verified data from Artificial Analysis, community testing, and official documentation.

| Feature | HappyHorse-1.0 | Seedance 2.0 | Key Distinction |
|---|---|---|---|
| Developer | Unknown team (linked to Alibaba/Kuaishou alumni) | ByteDance (Seed Research Team) | Seedance has verified provenance |
| Text to Video Elo (no audio) | 1385 (#1) | 1273 (#2) | HappyHorse leads by 112 points |
| Text to Video Elo (with audio) | 1225 (#2) | 1230 (#1) | Seedance wins when audio matters |
| Architecture (claimed) | 40-layer unified Transformer | Dual-Branch Diffusion Transformer | Different technical approaches |
| Audio Generation | Present, trails Seedance | Native dual-branch sync | Seedance architecturally stronger |
| Open Source Status | “Coming soon” (unverified) | Closed-source | Promises vs. reality gap |
| API Availability | No stable API | Dreamina access; BytePlus API paused | Seedance functionally accessible |
| Sample Size | Unknown (recently added) | 7,500+ votes in T2V | Seedance has more established data |
| Max Resolution | Claimed 1080p native | 2K (up to 3840×2160) | Seedance offers higher verified output |
| Multi-shot Logic | Unknown | Native narrative planner | Seedance confirmed capability |
| Commercial Licensing | Claimed open (unverified) | Proprietary with paid tiers | Different business models |

Comparison by Video Generation Scenario

The practical differences between these models become clear when examining specific use cases.

1. Silent Visual Content (Product Demos, B-Roll, Social Clips)

Winner: HappyHorse-1.0

The 112-point text-to-video (no audio) lead reflects genuine user preference for visual motion quality in silent scenarios. Community feedback on X consistently describes HappyHorse outputs as having:

- More natural camera drift

- Smoother body movement transitions

- Stronger atmospheric coherence

- Better subject-background integration

Best Use Cases:

- Product showcase loops for e-commerce

- Social media B-roll for music-first edits

- Concept animation from reference images

- Visual mood boards and pitch decks

Caveat: You cannot currently access HappyHorse through any production-ready API. All testing must occur through third-party demo sites with unknown terms of service.

2. Audio-Visual Synchronized Content

Winner: Seedance 2.0

Seedance narrowly leads both with-audio leaderboards: text-to-video by 5 points (1230 vs 1225) and image-to-video by 1 point, effectively a tie. The Dual-Branch Diffusion Transformer architecture generates video frames and audio waveforms simultaneously in a single pass, enabling:

- Frame-accurate sound effects (footsteps land when feet hit ground)

- Synchronized dialogue with proper lip-sync

- Ambient audio that matches scene dynamics

- Multi-language phoneme-level lip synchronization

Best Use Cases:

- Dialogue-driven narrative scenes

- Product demos requiring voiceover

- Music video concepts with beat matching

- Training videos with instructional audio

Example from DataCamp Testing: Seedance 2.0’s background music adapts in real-time to character emotions—starting sad and calm, then shifting to horror-like tones as the character screams into a mirror, without interfering with the scream audio or mirror-grabbing sound effects.

3. Image-to-Video (Reference Image Animation)

Winner: HappyHorse-1.0 (no audio)

HappyHorse’s Image to Video Elo of 1392 (no audio) is the highest score across all categories on the leaderboard. This suggests exceptional ability to:

- Maintain subject identity from reference image

- Preserve composition and framing choices

- Follow reference style and visual coherence

- Generate natural motion that respects input constraints

Best Use Cases:

- Brand character animation from static assets

- Product photography brought to life

- Storyboard frame-to-motion conversion

- Concept art animation for pitch presentations

Critical Limitation: Without stable API access, you cannot build reliable production workflows around HappyHorse’s Image to video capabilities, regardless of quality superiority.

4. Multi-Shot Narrative Sequences

Winner: Seedance 2.0 (verified capability)

Seedance 2.0 includes a narrative planner module that automatically:

- Breaks single prompts into distinct camera shots

- Selects appropriate shot types (wide, medium, close-up)

- Maintains character/scene consistency across cuts

- Adds natural transitions between shots

Example (from X/Twitter user @cfryant): An Avengers: Endgame parody prompt automatically generated a wide shot establishing the battlefield → zoom on Thanos → tilt to Thor → hard cut to Spider-Man, all with coherent Marvel-like cinematography and without explicit camera direction in the prompt.

HappyHorse’s multi-shot capabilities remain unverified in documentation.

5. Production Deployment Today

Winner: Seedance 2.0 (only accessible option)

Seedance 2.0 Access Paths:

- Dreamina platform (international): $18/month starting tier

- CapCut Pro integration (select markets)

- Jimeng (China mainland): 69 RMB/month (~$9.60 USD)

- Third-party providers preparing API endpoints

HappyHorse-1.0 Access Paths:

- Official API: None (as of April 9, 2026)

- GitHub weights: Marked “coming soon”

- Demo sites: Third-party wrappers with unknown SLAs

- Production pricing: Undocumented

For any workflow that requires consistent, reliable access at scale, Seedance 2.0 is currently the only viable choice between these two models.

Note: “Leaderboard #1” ≠ “Production Ready”

- Elo tells you: Which model users prefer in controlled blind comparisons

- Elo doesn’t tell you: Whether you can get 10,000 generations next Tuesday without a 503 error

HappyHorse might genuinely produce better silent video. But if you can’t call it reliably, that quality advantage exists only in the arena, not in your pipeline.
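To put the Elo gaps quoted in this article in perspective, the sketch below converts a rating difference into an expected head-to-head win share. It assumes the standard logistic Elo formula with a 400-point scale, E = 1 / (1 + 10^((R_b − R_a) / 400)); the arena's exact rating method may differ, so treat the results as rough intuition rather than official figures. The function name `elo_expected_score` is our own.

```python
def elo_expected_score(rating_a, rating_b):
    """Expected share of head-to-head wins for A over B under standard Elo."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Ratings as quoted in this article (HappyHorse-1.0 first, Seedance 2.0 second).
gaps = {
    "T2V, no audio": (1385, 1273),
    "I2V, no audio": (1392, 1355),
    "T2V, with audio": (1225, 1230),
}

for category, (happyhorse, seedance) in gaps.items():
    share = elo_expected_score(happyhorse, seedance)
    print(f"{category}: HappyHorse expected win share = {share:.1%}")
```

Under that assumption, the 112-point text-to-video lead corresponds to HappyHorse being preferred in roughly 66% of blind matchups, the 37-point image-to-video lead to about 55%, and the 5-point with-audio deficit to about 49%, which is close to a coin flip.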

Decision Framework: Which Model Should You Choose?

Choose Seedance 2.0 If:

✅ Audio quality is non-negotiable → It leads on both with-audio leaderboards and generates synchronized sound natively

✅ You need multi-shot narrative sequences → Verified storyboarding with automatic shot breakdown

✅ Production reliability matters → Functional access via Dreamina with documented pricing

✅ You want quad-modal reference control → Upload images/videos/audio and assign specific roles

✅ You’re building for clients or commercial use → Known team, clear licensing, corporate support

Best Use Cases: Marketing videos with voiceover, dialogue-driven content, multi-shot commercial productions, client deliverables requiring reliability

Monitor HappyHorse If:

✅ Visual motion fidelity is your top priority → The no-audio Elo leads reflect genuine quality in silent scenarios

✅ You’re willing to wait for infrastructure → If open weights and API materialize, the quality advantage becomes actionable

✅ You work with silent video workflows → Product loops, B-roll, social clips edited with separate audio

✅ You have in-house deployment capability → If/when weights release, you can self-host

Current Reality: Interesting for testing, not ready for production dependencies. The gap between leaderboard performance and usable infrastructure remains significant.
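The checklists above can be condensed into a small rule of thumb. The sketch below is only one way to encode them; the `ProjectNeeds` fields and the `recommend` function are hypothetical names of ours, and the rules reflect the access situation described in this article as of April 9, 2026.

```python
from dataclasses import dataclass


@dataclass
class ProjectNeeds:
    needs_audio: bool              # dialogue, voiceover, synced sound
    needs_multi_shot: bool         # automatic shot breakdown across cuts
    needs_production_access: bool  # reliable, repeatable access today
    can_self_host: bool = False    # in-house deployment capability


def recommend(needs: ProjectNeeds) -> str:
    """Map project requirements to the article's current recommendation."""
    # Any requirement HappyHorse cannot verifiably meet today points to Seedance 2.0.
    if needs.needs_audio or needs.needs_multi_shot or needs.needs_production_access:
        return "Seedance 2.0"
    # Silent, non-urgent work: HappyHorse is worth watching, not depending on.
    if needs.can_self_host:
        return "Monitor HappyHorse-1.0 (self-host if weights are released)"
    return "Monitor HappyHorse-1.0 (test via demos)"


print(recommend(ProjectNeeds(needs_audio=True, needs_multi_shot=False, needs_production_access=True)))
print(recommend(ProjectNeeds(needs_audio=False, needs_multi_shot=False, needs_production_access=False)))
```

If HappyHorse's weights and API do ship, the first rule is the one to revisit: production access would no longer automatically rule it out for silent workflows.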

Use Both When Available:

If HappyHorse’s promised open-source release materializes with functional infrastructure, the optimal strategy may become:

- HappyHorse for silent visual content (product demos, B-roll, atmospheric scenes)

- Seedance 2.0 for audio-critical content (dialogue, voiceover, synchronized music)

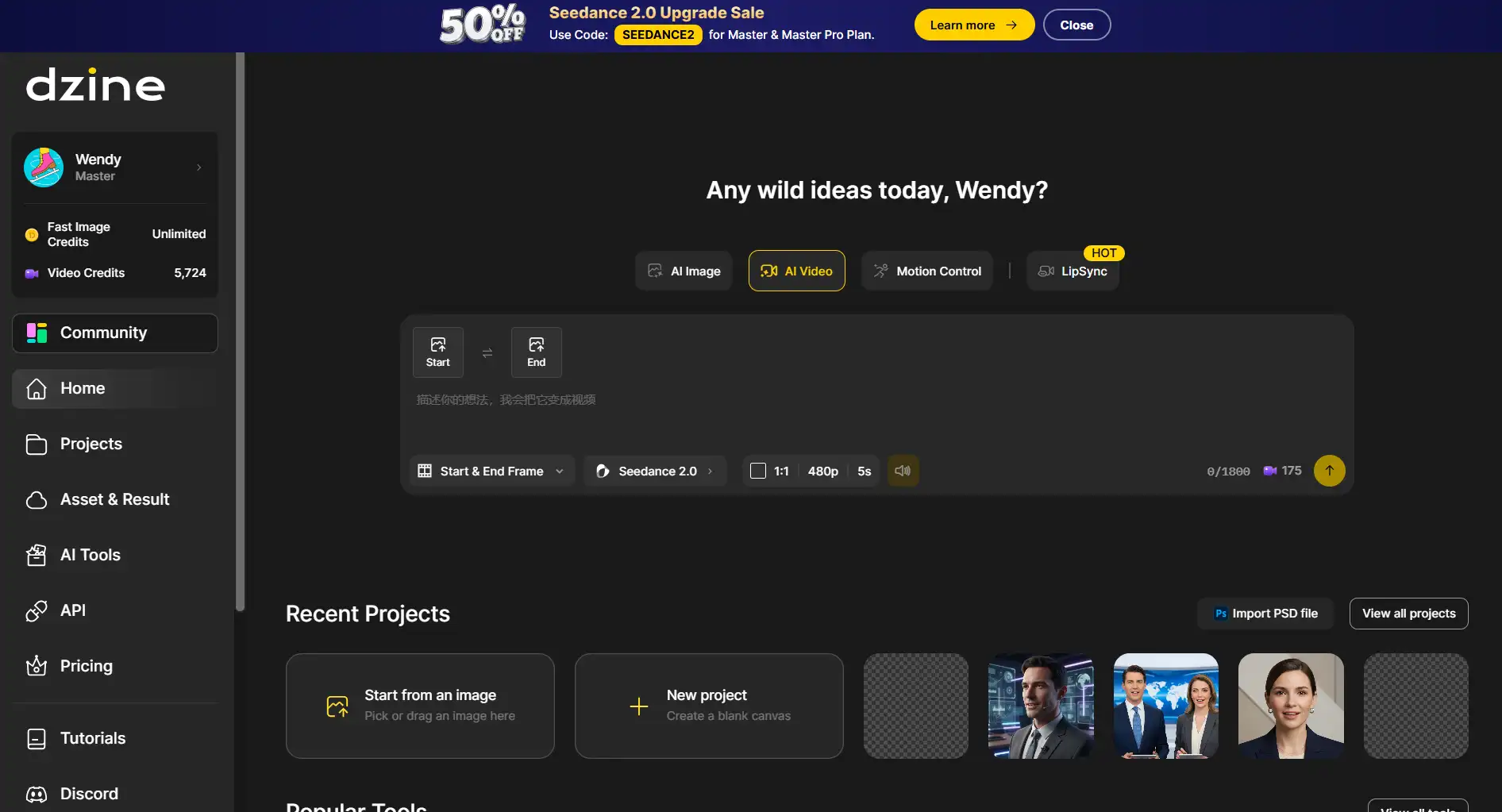

- Platform integration through Dzine or similar hubs to access both seamlessly

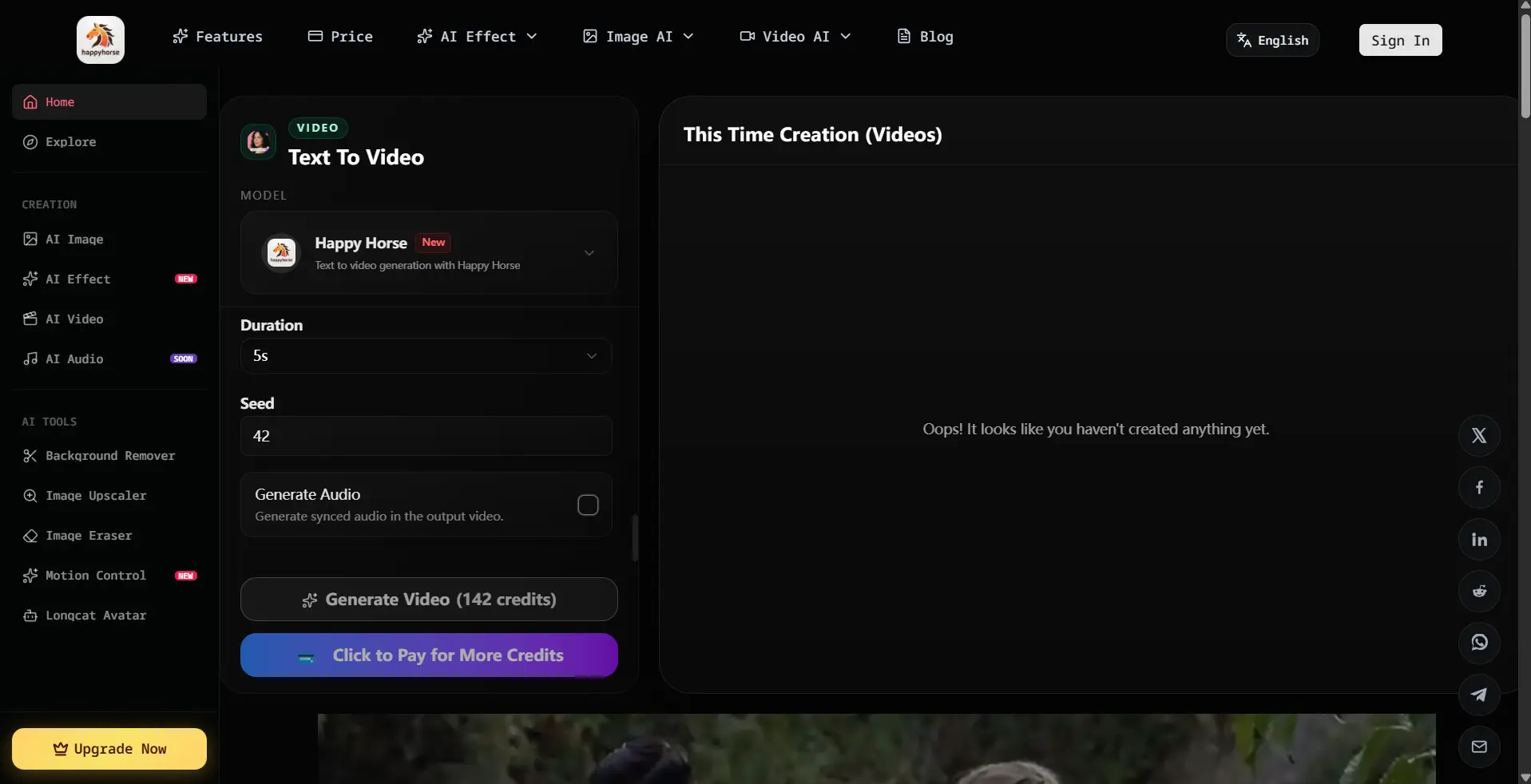

How to Use HappyHorse-1.0 and Seedance 2.0

When working with HappyHorse-1.0 and Seedance 2.0, you don’t have to pick just one. Platforms like Dzine bring both tools together in one place, so you can use them side by side and make the most of what each has to offer.

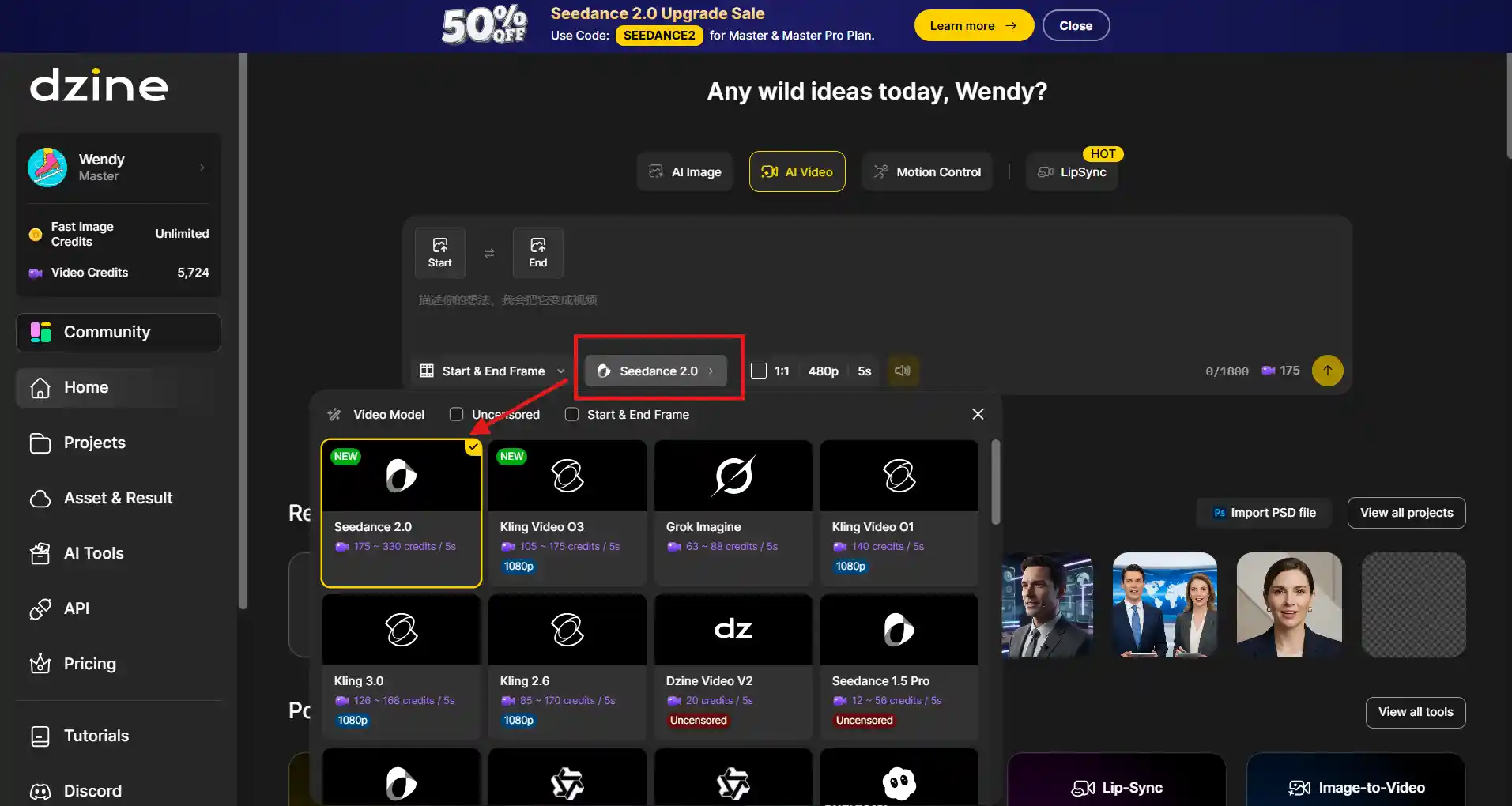

Step 1: Launch the AI Video tool on Dzine AI.

Step 2: Click the drop-down menu to choose Seedance 2.0 or HappyHorse (coming soon).

Step 3: Enter the prompt and upload the reference images. Then, click Generate.

Step 4: Click Download to save the generated video.

Limitations and Known Issues

HappyHorse-1.0 Limitations

Access and Infrastructure:

- No stable API for production use

- GitHub and HuggingFace links still inactive

- Third-party demos only—no official interface

- Unverified open-source claims

Technical (Reported by Community):

- Character detail sometimes trails Seedance 2.0

- Dynamic coherence issues in complex scenes

- Limited testing data (newer addition to arena)

- Possible portrait-bias in evaluation samples

Legal and Licensing:

- Unknown commercial use terms

- No verified IP protection policies

- Uncertain long-term support model

Seedance 2.0 Limitations

Access Complexity:

- Official API paused (copyright disputes)

- Consumer access functional but not programmatic

- Third-party API options require verification

- China-specific access requires local payment

Technical (Reported by DataCamp/Users):

- Complex glass layering can cause scene movement issues

- Background text occasionally pixelated in fast motion

- Minor character misidentification in crossover scenarios

- Music performance scenes retain slight uncanny valley feel

Cost Considerations:

- Paid tiers required for regular use

- No comprehensive free tier for extended testing

- API pricing uncertain pending third-party agreements

Conclusion: Two Models, Two Paths

HappyHorse-1.0 and Seedance 2.0 each have their strengths, but they cater to different needs. HappyHorse-1.0 excels in visual content with no audio, offering superior motion quality and atmospheric coherence, making it the preferred choice for silent videos (based on current Elo scores).

However, Seedance 2.0 is the go-to model for reliable, production-ready work. With fully accessible features like the narrative planner and dual-branch audio system, it’s the only option for workflows requiring consistent, repeatable outputs.

Practical Approach:

- Use Seedance 2.0 for audio-driven or complex projects.

- Test HappyHorse for visual-focused content in demo scenarios.

- Stay updated on HappyHorse for open-source releases and future infrastructure changes.

- Leverage both models through integrated platforms like Dzine for flexible workflows.

By understanding their unique strengths, you can make the right choice for your creative needs without getting caught up in a simple “which is better” comparison.

FAQ: HappyHorse vs Seedance 2.0

1. Is HappyHorse-1.0 actually better than Seedance 2.0?

It depends entirely on your measurement criteria. HappyHorse leads in visual quality for no-audio scenarios (Elo 1385 vs 1273 for T2V, 1392 vs 1355 for I2V). Seedance leads when audio quality and synchronization matter. Neither dominates all categories. “Better” only makes sense relative to your specific use case and whether audio is part of your deliverable.

2. Why does HappyHorse lead without audio but trail with audio?

Likely architectural differences. HappyHorse claims a unified single-stream Transformer processing all modalities together. Seedance uses a purpose-built dual-branch design where separate video and audio branches connect via cross-attention. That specialized audio branch appears to give Seedance an edge when sound quality and frame-accurate sync are being evaluated alongside visuals.

3. Can I access HappyHorse-1.0 via API today?

No. As of April 9, 2026, there is no stable, documented API endpoint for HappyHorse-1.0. Multiple wrapper sites offer browser-based demo access, but none publish API documentation, rate limits, production-grade SLAs, or clear pricing you could build a product around. The official GitHub and model hub are both listed as “coming soon.”

4. How reliable is the Artificial Analysis leaderboard for production decisions?

The Artificial Analysis Video Arena is the most credible crowdsourced signal for perceived video quality—it uses blind votes, Elo-based ranking, and real human preferences without lab self-reporting. However, it measures ONE thing: which output do users prefer in side-by-side comparison. It doesn’t account for generation speed, cost per clip, API reliability, uptime, or access stability. Use it as a quality input, not as a complete procurement decision framework.

5. Will HappyHorse-1.0 get audio improvements in future versions?

Unknown. No public roadmap exists. The model appeared less than a week ago under a pseudonym. If the promised open-source release happens, community contributions could improve audio quality through fine-tuning or architecture modifications. But there’s no timeline, no confirmed development team statement, and no announced v2 plans. Anything beyond current leaderboard performance is speculation.

6. Which model is better for image-to-video conversion?

HappyHorse-1.0 leads significantly in I2V without audio (Elo 1392 vs 1355), suggesting stronger reference image following and composition preservation. For silent product animations or brand character motion, HappyHorse shows user preference advantages. With audio included, scores are statistically tied (1-point difference). However, you cannot currently access HappyHorse’s I2V capabilities through production-ready infrastructure.

7. Is HappyHorse-1.0 really open source?

Not yet verified. The official sites claim “fully open source” with complete model weights, inference code, and commercial licensing. However, as of April 9, 2026, the GitHub repository link returns “coming soon,” the HuggingFace model hub link returns “coming soon,” and no downloadable weights or license files are publicly accessible. The claim exists, but the implementation does not—yet.