HappyHorse-1.0: The Mysterious AI Video Model Topping the Charts in 2026

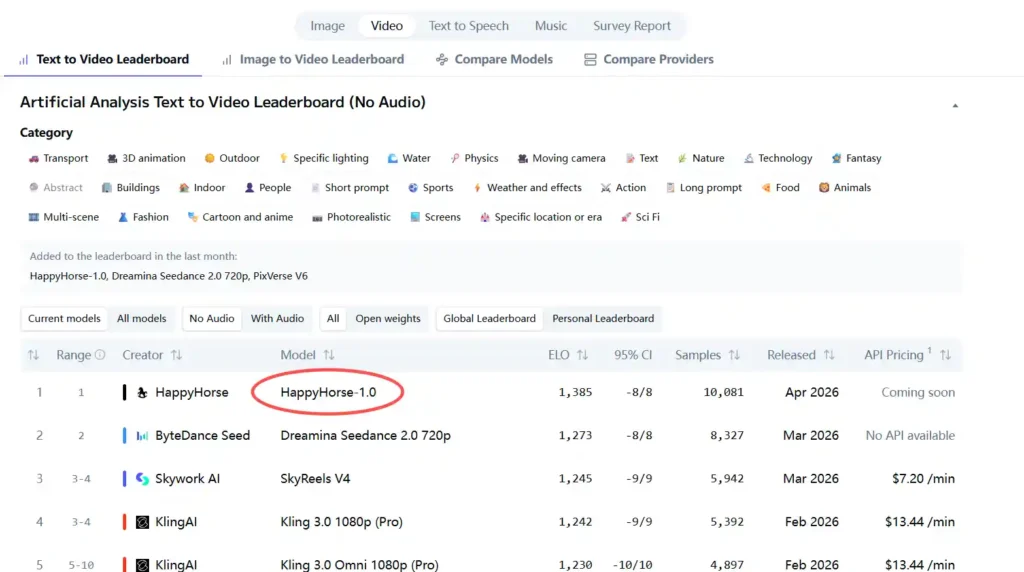

A mysterious AI model has suddenly claimed the top spot, shaking up the entire AI video industry. In April 2026, HappyHorse-1.0 quietly topped the Artificial Analysis Video Arena leaderboard, surpassing established players like Seedance 2.0, Kling 3.0, and PixVerse V6 with an Elo rating of roughly 1333.

No press conference, no official endorsement, not even clear information about its development team - this “dark horse” has left the industry questioning: what exactly is HappyHorse-1.0, and how did it achieve such a rapid rise?

This article from Dzine AI takes a close look at what HappyHorse-1.0 actually is, covering its technology, open-source status, competitor comparisons, and practical value, to help you keep up with the latest trends in AI video creation.

What Is HappyHorse-1.0?

HappyHorse-1.0 is an AI model focused on multimodal video generation, with two core functions that stand out in the market.

First, it supports Text-to-Video (T2V), allowing users to generate high-quality dynamic videos simply by inputting text descriptions. Second, it offers Image-to-Video (I2V), expanding static image materials into vivid video content.

Its most eye-catching feature is native audio-video synchronization. Unlike traditional AI video models that often suffer from “video without sound” or “audio-visual mismatch,” HappyHorse-1.0 achieves precise alignment between lip movements and sound effects without post-dubbing.

In terms of architecture, the model adopts a pure self-attention Transformer structure with approximately 15 billion parameters and a 40-layer network design. It processes four modalities (text, image, video, and audio) through unified encoding, enabling efficient alignment of cross-modal information.

Why HappyHorse-1.0 Is Trending

HappyHorse-1.0’s ability to overtake competitors in a short period is not accidental - it relies on three core advantages:

1. Targeted optimization for evaluation scenarios: It adjusts its generation strategy to the characteristics of blind test samples, performing exceptionally well in portrait and dubbing content (which accounts for over 60% of test cases). This significantly improves its blind test win rate.

2. Lightweight inference design: Using DMD-2 distillation, it compresses denoising to 8 steps and removes the CFG module, reducing computational load by nearly half and significantly improving generation efficiency.

3. Joint audio-video modeling: Traditional models process audio and video in separate steps, which can introduce synchronization errors. HappyHorse-1.0 uses a single-stream architecture to optimize both together, making it more likely to win user favor in blind tests.
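The compute claim in point 2 can be sanity-checked with a rough count of denoiser forward passes. This is a minimal sketch, assuming classifier-free guidance (CFG) costs two forward passes per denoising step (one conditional, one unconditional), which is how it is typically implemented. The function name `forward_passes` and the 50-step baseline are hypothetical; the article gives no baseline step count.

```python
def forward_passes(steps: int, use_cfg: bool) -> int:
    """Count denoiser forward passes for one generation.

    Assumes CFG costs two passes per step (conditional + unconditional)
    and a guidance-free model costs one pass per step.
    """
    passes_per_step = 2 if use_cfg else 1
    return steps * passes_per_step


# Same 8-step schedule, with and without CFG: per-step cost is halved.
with_cfg = forward_passes(steps=8, use_cfg=True)
without_cfg = forward_passes(steps=8, use_cfg=False)
print(without_cfg / with_cfg)  # 0.5

# Hypothetical undistilled baseline: 50 steps with CFG (assumed, not from the article).
baseline = forward_passes(steps=50, use_cfg=True)
print(baseline / without_cfg)  # 12.5
```

Under these assumptions, removing CFG alone accounts for the "nearly half" figure, while the savings from step distillation depend entirely on the baseline schedule being compared against.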

Core Capability Breakdown: Beyond the Ranking

Video Quality & Generation Speed

HappyHorse-1.0 excels in generating 1080p high-definition videos, with clear details, natural motion transitions, and no obvious frame skipping or blurring - problems that plague many mid-tier AI video models.

Its inference speed is even more remarkable: it can generate a 1080p short video (15-30 seconds) in about 38 seconds, which is 30%-40% faster than Seedance 2.0 and PixVerse under the same hardware conditions.

Audio-Video Synchronization & Multilingual Support

As mentioned earlier, its native audio-video synchronization is a major highlight. It can automatically generate matching sound effects and dubbing based on video content, with lip-sync accuracy of over 90% in English and Chinese.

Additionally, it supports multilingual text input (English, Chinese, Spanish, French, etc.), making it suitable for global content creators to use without language barriers.

Open Source in Name Only? The Truth Behind “Coming Soon”

One of the most controversial points about HappyHorse-1.0 is its “open-source” claim. The official team stated that the model will be open source, but as of April 2026, there is still no real release on GitHub or Hugging Face - only a “coming soon” notice.

This raises a key question: what is the difference between “nominal open source” and “actually usable open source”? For most developers and content creators, open source means free access to code, model weights, and deployment tools.

HappyHorse-1.0’s current state is more of a “preliminary announcement” than a real open-source release. The team may still be optimizing the model, or this may be a marketing strategy to attract attention. Either way, it is not yet available for public use.

Dzine will integrate the HappyHorse-1.0 API as soon as it is released. In the meantime, you can try other popular AI image and video models such as Nano Banana 2, Nano Banana Pro, Seedance 2.0, and Kling 3.0. We provide a 7-day free trial.

Current Limitations of HappyHorse-1.0

Despite its impressive ranking, HappyHorse-1.0 still has obvious limitations that prevent it from being widely used:

1. Unavailable for download: No official download link, no model weights released, and no beta test application channel.

2. No API support: Developers cannot integrate it into their own tools or platforms, limiting its practical application scenarios.

3. Unverified commercial use: There is no clear statement on whether commercial use is allowed, and potential copyright risks exist for users who attempt to use it for commercial purposes.

4. Limited scene adaptation: As mentioned earlier, it performs well in portrait and dubbing scenarios but is weak in complex dynamic scenes and professional fields.

The Secret to Topping the Elo Leaderboard: Mechanism Analysis & Ranking Logic

Elo Rating System: A Quantitative Yardstick for User Perception

The Elo system originated from the chess rating system, and its core is to dynamically calculate model performance through user blind testing comparisons.

Its working principle is straightforward: all models start with a base Elo score (usually 1000 points). Then, users are asked to blindly select between two random videos, and their preferences are recorded.

Scores are adjusted based on expected win rates and actual results: beating a higher-rated model earns more points, while losing to a lower-rated model results in more points lost. What makes the Artificial Analysis leaderboard special is its focus on real user perception rather than technical parameters, making HappyHorse-1.0’s top ranking more valuable for industry reference.
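The update rule described above can be sketched with the standard Elo formulas from chess. This is an illustration under stated assumptions, not the leaderboard's actual implementation: the K-factor of 32 and the function names `expected_score` and `update` are assumed, and the article does not say how Artificial Analysis computes its scores in detail.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return (new_rating_a, new_rating_b) after one blind comparison.

    k is the K-factor, an assumed value that controls how fast ratings move.
    """
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - exp_a)
    # Points gained by one model are lost by the other.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    # Upset: a 1000-rated model is preferred over a 1200-rated model.
    print(update(1000.0, 1200.0, a_won=True))
    # Expected result: the 1200-rated model is preferred over the 1000-rated one.
    print(update(1200.0, 1000.0, a_won=True))
```

With these assumed settings, the upset moves about 24 points from the higher-rated model to the lower-rated one, while the expected result moves only about 8 points. That asymmetry is what "beating a higher-rated model earns more points" means in practice.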

Is the Leaderboard Biased? Potential Limitations in Evaluation

While HappyHorse-1.0’s top ranking is impressive, the potential biases of the Artificial Analysis leaderboard deserve a sober look.

The biggest issue is portrait preference. The blind test samples of the leaderboard are dominated by portrait and dubbing content (over 60%), which happens to be the area where HappyHorse-1.0 is optimized.

In contrast, in scenarios such as dynamic scenes, complex backgrounds, or professional industrial videos, HappyHorse-1.0’s performance is not significantly better than that of top competitors. This means its high Elo score is partially due to the alignment between its advantages and the leaderboard’s test focus.
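A small weighted-average calculation shows how a prompt mix can lift an overall result. The 60% portrait and dubbing share is the figure quoted in this article. The per-category win rates (0.65 and 0.50) and the 30% "balanced" share are invented purely for illustration; they are not measured results for any model.

```python
def overall_win_rate(shares: dict[str, float], win_rates: dict[str, float]) -> float:
    """Average per-category win rates, weighted by each category's share of test prompts."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("category shares must sum to 1")
    return sum(shares[category] * win_rates[category] for category in shares)


# Hypothetical win rates: strong on portrait/dubbing, even elsewhere (illustrative only).
win_rates = {"portrait_dubbing": 0.65, "other": 0.50}

# Prompt mix skewed toward portraits (60% share, as described in the article).
skewed = overall_win_rate({"portrait_dubbing": 0.60, "other": 0.40}, win_rates)

# Hypothetical more balanced prompt mix (assumed 30% share).
balanced = overall_win_rate({"portrait_dubbing": 0.30, "other": 0.70}, win_rates)

print(round(skewed, 3), round(balanced, 3))  # 0.59 0.545
```

With identical per-category performance, the skewed mix yields a higher overall win rate than the balanced one, which is how test composition alone can raise an Elo score.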

Competitor Comparison: How Does HappyHorse-1.0 Stack Up?

To better understand HappyHorse-1.0’s position in the industry, let’s compare it with three mainstream AI video models:

• Seedance 2.0: Strong in complex scene generation and API support; its disadvantage is slower inference speed. HappyHorse-1.0 is faster but less versatile.

• Kling: Strong in 4K video generation and commercial authorization; its disadvantage is high usage cost. HappyHorse-1.0 (if open-sourced) may have a cost advantage, but its quality is not yet comparable to Kling’s 4K output.

• PixVerse: Excellent in creative video generation (e.g., animations, fantasy scenes); its disadvantage is poor audio-video synchronization. HappyHorse-1.0’s synchronization capability is a clear advantage here.

Overall, HappyHorse-1.0 has its own highlights but is not yet a “comprehensive leader” - it excels in specific scenarios but lags behind competitors in others.

Who Should Pay Attention to HappyHorse-1.0?

HappyHorse-1.0 deserves the most attention from the following groups:

1. Content creators (short videos, dubbing, portraits): Its strengths in portrait, dubbing, and fast generation closely match the needs of short video creators, YouTubers, and live streamers.

2. AI developers and researchers: If it is truly open-sourced, its 15-billion-parameter Transformer architecture and joint audio-video modeling will have high research value.

3. Small and medium-sized enterprises (SMEs) in marketing: If it is free or low-cost after open-sourcing, it can help SMEs reduce video production costs (e.g., product promotions, brand short videos).

4. AI enthusiasts and trend followers: For those who follow the latest developments in AI video, HappyHorse-1.0’s mysterious background and rapid rise make it one to watch.

Practical Advice: How Content Creators Can Prepare in Advance

Even though HappyHorse-1.0 is not yet available, content creators can plan ahead to seize opportunities once it is released:

1. Sort out content directions: Focus on portrait, dubbing, and short video scenarios that align with HappyHorse-1.0’s advantages, and prepare script templates and material libraries in advance.

2. Optimize hardware configuration: Its lightweight design means it does not require top-tier hardware, but preparing a mid-to-high-end GPU (e.g., NVIDIA RTX 4070 or above) can ensure smooth use.

3. Follow official updates: Watch GitHub, Hugging Face, and relevant AI communities to be among the first to get download and usage information.

4. Test alternative tools: While waiting, practice with Seedance, Kling, and similar tools to master basic AI video creation skills, so you can get started with HappyHorse-1.0 quickly.
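For the hardware point, a back-of-the-envelope estimate of weight memory can help with planning. This sketch uses the roughly 15 billion parameters quoted in this article and standard bytes-per-parameter figures for common precisions. It counts weights only; activations and any auxiliary components such as encoders or decoders are ignored, and which precisions a released model would actually support is unknown.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Memory needed just to hold the weights, in gigabytes (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


PARAMS = 15e9  # approximate parameter count quoted in the article

for precision in BYTES_PER_PARAM:
    print(f"{precision}: {weight_memory_gb(PARAMS, precision):.1f} GB")
```

At 2 bytes per parameter the weights alone come to about 30 GB, more than the 12 GB of VRAM on a desktop RTX 4070. Running locally on such a card would therefore likely depend on quantization or offloading, neither of which has been confirmed for this model.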

Conclusion: Is HappyHorse-1.0 Worth Waiting For?

After comprehensive analysis, the answer is: Worth paying attention to, but not worth blindly waiting for.

HappyHorse-1.0 has obvious advantages in audio-video synchronization, fast generation, and portrait scenarios, and its potential is undeniable. However, its current limitations - unavailable for use, unclear open-source timeline, and limited scene adaptation - mean it cannot replace existing mature tools.

For content creators, the best strategy is to keep an eye on its official updates while continuing to use existing tools to improve your skills. Once HappyHorse-1.0 is officially released and open-sourced, you can quickly switch and seize the first-mover advantage.

For the industry, HappyHorse-1.0’s rise is a reminder: in the AI video track, “focus on user needs” is the key to breaking through. Whether it can maintain its advantage depends on whether the team can solve the current limitations and truly deliver on its open-source promise.

FAQ

Can I use HappyHorse-1.0 now?

No. As of this post's publication, it is not available for download or use. Dzine will integrate the HappyHorse-1.0 API as soon as it is released.

Will it be free after release?

It is not clear yet. The team has only said it will be open source, but open source does not necessarily mean free for commercial use. We need to wait for the specific license terms.

Is HappyHorse-1.0 worth paying attention to?

Yes, especially for creators focusing on portrait and short video content. Its audio-video synchronization and fast generation speed have great potential.

Is it suitable for commercial use?

Not yet. There is no clear commercial authorization statement, and using it without permission may involve copyright risks. Wait for official guidelines.

Will it surpass Sora/Veo in the future?

Unlikely in the short term. Sora/Veo have mature technical accumulation and large-scale data support. HappyHorse-1.0 has advantages in specific scenarios but lacks comprehensive strength.